Improving Drupal Site Performance - Part II

Part 1 of this 2-part post on improving the performance of a Drupal site covered some simpler steps. Here, we will cover more advanced items that can help improve performance and in particular, help when the main traffic to your site is authenticated users.

Part 1 of this 2-part post on improving the performance of a Drupal site covered some simpler steps. Here, we will cover more advanced items that can help improve performance and in particular, help when the main traffic to your site is authenticated users.

MySQL Optimization

The majority of drupal sites, including those running in hosted environments such as Acquia, run on MySQL. Given Drupal 7 uses the InnoDB storage engine by default, there a few MySQL and InnoDB configuration options that can make the world of difference.

Many of the suggestions here may not be applicable when running in a some hosted environments since the hosting provider may not provide the ability to modify MySQL’s configuration. However, its worth opening a dialogue with your hosting provider if you feel modifying any of these settings may improve your site’s performance.

InnoDB Buffer Pool

The InnoDB buffer pool can be thought of as another caching layer; between the file system and the database. However, it is a very intelligent cache and InnoDB has many complex algorithms in place to manage its contents. The size of the InnoDB buffer pool is controlled by the innodb_buffer_pool_size parameter.

In a perfect world, enough memory will be allocated for the InnoDB buffer pool so that all your data can fit in it. One way to calculate how much disk space a schema is currently using is with a query similar to the following:

SELECT table_schema,

table_schema,

Concat(sum(Round(data_length / ( 1024 * 1024 ), 2)), 'Mb')

AS data_length_mb,

Concat(sum(Round(index_length / ( 1024 * 1024 ), 2)), 'Mb')

AS index_length_mb,

Concat(sum((Round(Round(data_length + index_length) / ( 1024 * 1024 ), 2))), 'Mb')

AS total_size_mb

FROM

information_schema.TABLES

WHERE

table_schema = '?'

GROUP BY

table_schema;

Running that query on a teeny tiny schema will produce output similar to:

*************************** 1. row ***************************

table_schema: drupal

data_length_mb: 18.82Mb

index_length_mb: 13.76Mb

total_size_mb: 32.00Mb

If we wanted to allocate enough memory for the InnoDB buffer pool to contain all that data, we would set innodb_buffer_pool_size to 512M (this will easily cover this tiny schema).

The efficiency of the InnoDB buffer pool can be monitored over time by tracking the output of the SHOW INNODB STATUS command. In particular, you will see there is a section dedicated to the InnoDB buffer pool which shows the buffer pool hit rate.

Query Cache

The MySQL query cache stores complete result sets for SELECT statements. Before parsing a SQL query, MySQL checks to see if it is contained within the query cache. This is a pretty crude check and if the query differs in the slightest, its considered a cache miss. If there is a cache hit though, results can be returned immediately resulting in much improved query performance times.

While there is some debate from various MySQL experts on how scalable MySQL’s query cache is, we do find that its typically worth enabling this cache for a drupal site since many drupal sites that we see have read-heavy workloads with many complex SELECT queries.

If you do choose to enable the query cache, the cache hit rate can be calculated by monitoring using various status variables as so:

Qcache_hits / (Qcache_hits + Com_select)

Another status variable to monitor is Qcache_inserts. If Com_select and Qcache_inserts are relatively close in size, then it is indicative that a lot of cache misses are happening.

By default, the query cache is disabled in MySQL (at least from 5.5 onwards). If we do decide to enable this cache for a site, we limit its size to about 50 MB. The query cache size configuration is controlled by the query_cache_size parameter.

On Disk Temporary Tables

Many times the MySQL execution engine is required to create a temporary table (such as when needing to do a sort for an ORDER BY operation). However, in certain scenarios, this temporary table will be created on disk as opposed to in-memory. MySQL creates a temporary table on disk when:

- a query that uses a BLOB columns needs a temporary table.

- the size of the temporary table is larger than either max_heap_table_size or tmp_table_size.

If MySQL needs to create a temporary table on disk, it creates a MyISAM table. As you can imagine, this can cause huge degradation in performance; especially if running in an environment with poor I/O performance.

Ideally, BLOB types should be avoided in queries if possible but since this is Drupal, we may not have the luxury of rewriting the query without resorting to ugly hacks like rewriting SQL on the fly. Thus, there are a few options that can help a little.

The first is to allocate more memory for in-memory temporary table creation. This is done by modifying the max_heap_table_size and tmp_table_size parameters. This is far from ideal and we don’t typically recommend doing this but its worth experimenting with if you are seeing many temporary tables on disk being created in your environment.

The second option is to configure MySQL to create on-disk temporary on a RAM disk. This means the MyISAM temporary table is essentially created in-memory. This technique can be highly beneficial and if a site is having performance problems due to on-disk temporary tables, implementing this option can prove beneficial. Note that many hosting providers such as Acquia already do this.

To monitor how many on-disk temporary tables are being created, keep an eye on the Created_tmp_disk_tables and Created_tmp_tables status variables.

Query Optimization

A lot of benefit can be achieved by tuning individual problematic SQL queries. The first step in this process is to identify what queries are problematic. The MySQL slow query log is a great tool to aid in this process. Configure MySQL to log all queries that are not using indexes and that are taking longer than 1 second and let you your site run for a day or two. After a day or two, pt-query-digest can then be used on the slow query log to easily highlight the most problematic queries.

Another option for identifying problematic queries is to use the devel module. This module can show you all the queries that are executed as part of loading a page and additionally, what the execution time of each individual query was. This can help you identify if any problematic SQL query is causing page load times to increase.

Once problematic queries have been identified, the next step is to view their execution plan in MySQL. This is achieved using the EXPLAIN command in MySQL. Now whole books have been written on query optimization by many people smarter than myself so I’m not going to go into much detail here.

In general, assuming that you cannot modify the SQL that is being generated by Drupal, you want to do some of the following:

- avoid temporary tables being created. Strategies for avoiding this include creating an index that satisfies the sort order of the query i.e. includes all the columns in the ORDER BY clause in the correct order

- avoid full table scans. Creating an index on the predicates in a query can help with this depending on the selectivity of predicates in the query.

- use covering indexes. A covering index is an index that never needs to perform a table lookup but can satisfy a query completely from the index. This can offer large performance advantages.

Please note the above are very general suggestions. If you are interested in learning more about query optimization, I recommend High Performance MySQL and Relational Database Index Design and the Optimizers for some deep coverage of this topic.

InnoDB I/O Configuration

What InnoDB does on transaction commit is controlled by innodb_flush_log_at_trx_commit. In order to ensure ACID compliance the innodb_flush_log_at_trx_commit variable must be set to 1 (this is also the default value). When set to 1, InnoDB performs a fsync() system call on transaction commit.

Some sites may choose to loosen the D (durability) guarantees provided by InnoDB to speed up write performance. We do not recommend that this setting be modified. If writes are becoming a bottleneck for your site and losing some data is not considered an issue, then modifying this configuration parameter may be a viable option. Typically though, its best to leave this at its default.

The innodb_flush_method system variable controls how data is written to disk by InnoDB. Setting this to O_DIRECT means that the file system cache will be by-passed and InnoDB will write directly to disk. This prevents double-buffering from happening. Modifying this variable from its default is not guaranteed to always improve performance so if if you do plan on changing this setting, its worth making sure you have a performance test you can run to verify it improves performance of your site in a positive manner.

Use Solr for Search

Many sites depend heavily on the search functionality provided by Drupal. The default search module provided with Drupal uses the underlying database for storing a search index and for performing search requests. While this works for small sites with little search traffic, it can become a performance problem when many users start performing complex searches.

Apache Solr is a tried and trusted open source search server used by many of the largest sites around today. Many modules exist for integrating Solr with Drupal. Acquia also offers Solr as a service leading to easy deployment for existing Acquia customers.

We typically use the Acquia sponsored Drupal module since the majority of our clients are deployed within the Acquia Network. Another option is the search API and the implementation of that API that uses Solr.

Offloading search requests to Solr can make a dramatic difference to search performance for a heavily trafficked site; in particular a site with high search traffic.

Alternative Field Storage Modules

The mongodb project for drupal provides a number of integration points for this NoSQL database. I have personally tested the field storage implementation from a performance point of view and have noticed good results. For the field storage module, the traditional SQL implementation maintains a separate table per field. In the MongoDB field storage module, a single document is maintained for each entity and this document contains all the fields for that entity. Thus, the need for joins when retrieving the fields for an entity are removed and this also means that there is only 1 location that needs to be written to when adding a new entity.

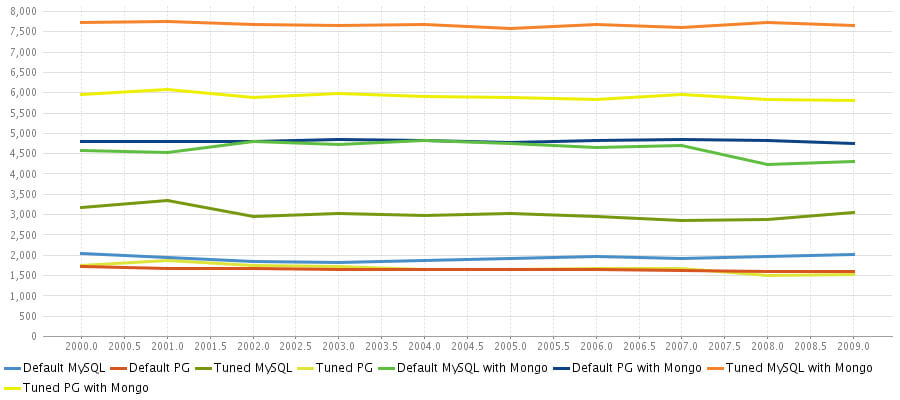

The numbers I observed when testing various field storage modules are shown in the image below.

As you can see, Drupal running on a MySQL database using MongoDB for field storage comes out on top by a nice margin. For the full article and details on the benchmark, please see my personal blog.

While MongoDB shows promise, for many sites deployed in various hosting environments, its just not an option. Luckily, the MongoDB project is not the only one to offer an alternative field storage module.

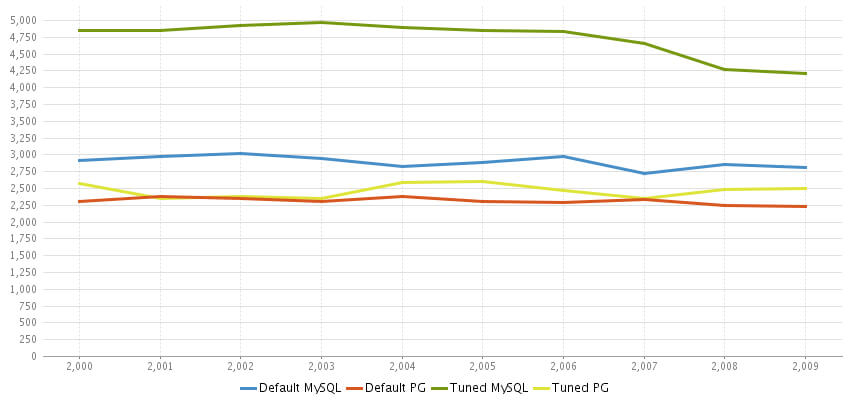

An alternative field storage module that uses the underlying SQL database exists. This module does not store revisions for fields so if that is acceptable for your site, this module can improve performance nicely. Again, results running tests with this module are reproduced below.

You can see that with this module, the numbers we achieve with MySQL are a noticeably improved. For more details on tests with this module, see my personal blog.

Additional Suggestions

AuthCache

AuthCache is an interesting idea for improving the performance of sites whose traffic consists mostly of authenticated users. The one significant downside to this module is that additional development effort may be required in order to see the major performance benefits that are possible. It is not a simple plug and play module but it seems like a promising solution if you are willing to put the effort in.

Edge Side Includes

Edge side includes is a language to include fragments of web pages in other web pages. The esi module is another module that serves the needs of sites looking to improve performance when traffic consists moslty of authenticated users. We made extensive use of ESI when building the new application for the MTA in New York. Many more details on how we used ESI for this project are included in the webinar we delivered on the subject with Acquia.

CDN

The CDN module for Drupal provides Content Delivery Network integration for your Drupal site. Various CDN servers can be used such as Amazon CloudFront or MaxCDN. Articles exist on configuring this module with Amazon CloudFront and for the low setup cost involved, the CDN module can prove extremely beneficial. One of our readers of our last post also mentioned this module in a comment.

Yottaa

An interesting new beta plugin has recently been released by Yottaa that looks promising. This plugin applies Yottaa’s site optimizer technology in a manner specific to Drupal sites and can deliver significant performance improvements. An additional benefit if you are an Acquia customer is that Yottaa is already offered as an add-on in the Acquia Network.

Conclusion

While not every site may need to optimize performance to the extent we discuss here, most sites will benefit from some optimization. Make sure your development team is aware of these options and has a plan that will accommodate regular usage and unanticipated spikes. We do hope this series has proven useful and if people have any other suggestions for techniques to use, please feel free to leave a comment and let us know!